Predicting home electricity usage based on historical patterns in Home Assistant

I use Home Assistant for automation, so the solution described uses Home Assistant's sensor configuration and database layout, however the overall idea should be more widely applicable.

I've been trying to minimise what we pay for electricity at home, by optimising the times at which e.g. house battery charges or water heats up. To do that it's useful to be able to predict future load. Why does this help ? Imagine a situation where you want to decide if to charge battery from grid, and by how much. If current battery charge will fully cover your predicted load until e.g. sun comes up and gives you solar you're golden, but if not it might make sense to charge from the grid.

As an aside, I am also in the middle of writing optimizer software for solving the whole problem (solar, battery, heat pump setup) using MILP solver but this is definitely a separate post.

As I'm on a tariff where price changes every half hour, I would like the resolution of the prediction also be in half hourly slots so that we can also predict the cost of future electricity usage.

Our house load differs quite a bit based on the time of the day as well a day of the week, weekends being much different than the working days etc. Idea is to use historical data, find what happened in the half hourly slot we're interested in in the past, e.g. if we're interested in load prediction for 9:30-10:00 for a Wednesday morning, let's look at previous 9:30-10:00 slots on previous Wednesdays and average them out, with most recent being the most important. I then blend it with the respective slots from last 2 days, just to catch any new emerging patterns, sometimes we have longer running loads that we switch on very ocasionally.

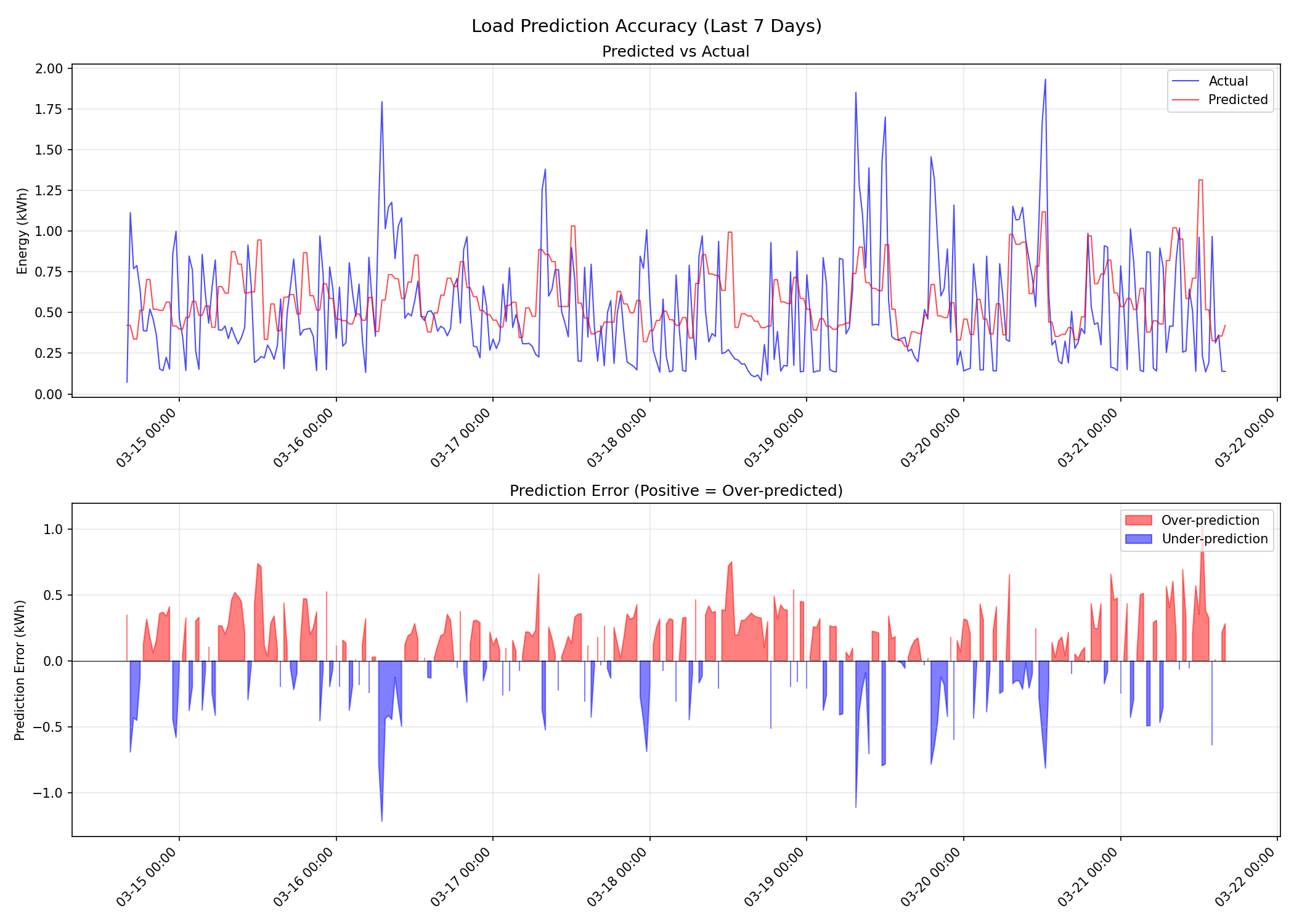

What follows is what I settled on after a bit of tuning - the weights are what worked for my load patterns but as you can see this week had more load overall, so we're underpredicting in some cases. Here's last week of data, if you squint you can see that the overall shape is correct, with some outliers.

And here's the implementation - I'm using the statistics table in addition to the states table because states typically only have a week of data.

statistics are only kept with one data point per hour unfortunately so we need to extrapolate half hourly from that.

Feel free to take this code and play with it - adjust the weights and underlying sensor name.

Not sure how idiomatic the output format is, maybe there's a better way, but I am parsing it in a custom piece of code separately anyway so does not make much difference to my usecase. The output is effectively a lookup table for the predicted load at different days of the week and times, as computed using data from the last 8 weeks; where you can ask it for a day of the week and a slot, so e.g. 0_09:00 is 9:00-9:30 on Sunday.

# Day-of-week-aware half-hourly total load, recency-weighted with recent-day blending.

# Returns a week of entries (336) keyed by "{dow}_{HH:MM}" (e.g. "0_09:00" = Sunday 09:00).

# Uses a hybrid approach:

# - Last 7 days: States table with trapezoidal integration (high precision)

# - 7-56 days: Statistics table with hourly data divided by 2

# Blends: 65% DOW weighted average + 25% yesterday same slot + 10% 2-days-ago same slot.

# OUTPUT: kWh per half-hour slot (predicted energy consumed during that 30-minute period).

- name: "predicted half hourly load by day of the week"

unique_id: "half_hourly_load_by_dow_sql"

query: >

WITH

recent_raw AS (

SELECT

strftime('%w', last_updated_ts, 'unixepoch', 'localtime') as dow,

strftime('%H', last_updated_ts, 'unixepoch', 'localtime') as hour,

CASE

WHEN CAST(strftime('%M', last_updated_ts, 'unixepoch', 'localtime') AS INTEGER) < 30

THEN '00' ELSE '30'

END as minute,

DATE(last_updated_ts, 'unixepoch', 'localtime') as date,

last_updated_ts as ts,

CAST(state AS FLOAT) as power_w

FROM states s

INNER JOIN states_meta sm ON s.metadata_id = sm.metadata_id

WHERE sm.entity_id = 'sensor.growatt_invpowertolocalload'

AND s.state NOT IN ('unknown', 'unavailable', '')

AND CAST(s.state AS FLOAT) BETWEEN 0 AND 15000

AND last_updated_ts >= unixepoch('now', '-7 days')

),

with_intervals AS (

SELECT

dow, hour, minute, date, ts, power_w,

LAG(ts) OVER (PARTITION BY dow, hour, minute, date ORDER BY ts) as prev_ts,

LAG(power_w) OVER (PARTITION BY dow, hour, minute, date ORDER BY ts) as prev_power_w

FROM recent_raw

),

interval_areas AS (

SELECT

dow, hour, minute, date,

((power_w + prev_power_w) / 2.0) *

((ts - prev_ts) / 3600.0) as interval_wh

FROM with_intervals

WHERE prev_ts IS NOT NULL

AND (ts - prev_ts) < 300

),

recent_integrated AS (

SELECT

dow,

hour || ':' || minute as time_slot,

date,

SUM(interval_wh) / 1000.0 as kwh_per_slot,

COUNT(*) as interval_count

FROM interval_areas

GROUP BY dow, hour, minute, date

HAVING COUNT(*) >= 3

),

older_hourly AS (

SELECT

strftime('%H:00', start_ts, 'unixepoch', 'localtime') as hour_slot,

strftime('%w', start_ts, 'unixepoch', 'localtime') as dow,

DATE(start_ts, 'unixepoch', 'localtime') as date,

state as energy_kwh,

LAG(state) OVER (ORDER BY start_ts) as prev_energy_kwh

FROM statistics s

INNER JOIN statistics_meta sm ON s.metadata_id = sm.id

WHERE sm.statistic_id = 'sensor.growatt_localloadenergytotal'

AND s.state IS NOT NULL

AND start_ts >= unixepoch('now', '-56 days')

AND start_ts < unixepoch('now', '-7 days')

),

older_half_hourly AS (

SELECT

dow,

hour_slot,

date,

(energy_kwh - prev_energy_kwh) / 2.0 as kwh_per_slot

FROM older_hourly

WHERE prev_energy_kwh IS NOT NULL

AND energy_kwh > prev_energy_kwh

AND (energy_kwh - prev_energy_kwh) BETWEEN 0.025 AND 5.0

),

older_expanded AS (

SELECT dow, hour_slot as time_slot, date, kwh_per_slot FROM older_half_hourly

UNION ALL

SELECT dow, SUBSTR(hour_slot, 1, 3) || '30' as time_slot, date, kwh_per_slot

FROM older_half_hourly

),

combined_data AS (

SELECT dow, time_slot, date, kwh_per_slot, 'states_integrated' as source

FROM recent_integrated

UNION ALL

SELECT dow, time_slot, date, kwh_per_slot, 'statistics' as source

FROM older_expanded

),

dow_ranked AS (

SELECT

dow, time_slot, date, kwh_per_slot,

ROW_NUMBER OVER (PARTITION BY dow, time_slot ORDER BY date DESC) as rn

FROM combined_data

WHERE kwh_per_slot BETWEEN 0.01 AND 5.0

),

dow_weighted AS (

SELECT

dow, time_slot,

CASE

WHEN COUNT(*) >= 3 THEN ROUND(

SUM(kwh_per_slot * CASE rn

WHEN 1 THEN 0.40 WHEN 2 THEN 0.25 WHEN 3 THEN 0.15

WHEN 4 THEN 0.10 WHEN 5 THEN 0.06 WHEN 6 THEN 0.04 ELSE 0 END) /

SUM(CASE rn

WHEN 1 THEN 0.40 WHEN 2 THEN 0.25 WHEN 3 THEN 0.15

WHEN 4 THEN 0.10 WHEN 5 THEN 0.06 WHEN 6 THEN 0.04 ELSE 0 END), 4)

WHEN COUNT(*) >= 1 THEN ROUND(AVG(kwh_per_slot), 4)

ELSE 0.15

END as dow_avg_kwh

FROM dow_ranked

WHERE rn <= 6

GROUP BY dow, time_slot

),

yesterday_integrated AS (

SELECT

hour || ':' || minute as time_slot,

SUM(interval_wh) / 1000.0 as kwh

FROM (

SELECT

strftime('%H', last_updated_ts, 'unixepoch', 'localtime') as hour,

CASE

WHEN CAST(strftime('%M', last_updated_ts, 'unixepoch', 'localtime') AS INTEGER) < 30

THEN '00' ELSE '30'

END as minute,

((CAST(state AS FLOAT) + LAG(CAST(state AS FLOAT)) OVER (ORDER BY last_updated_ts)) / 2.0) *

((last_updated_ts - LAG(last_updated_ts) OVER (ORDER BY last_updated_ts)) / 3600.0) as interval_wh

FROM states s

INNER JOIN states_meta sm ON s.metadata_id = sm.metadata_id

WHERE sm.entity_id = 'sensor.growatt_invpowertolocalload'

AND DATE(last_updated_ts, 'unixepoch', 'localtime') = DATE('now', 'localtime', '-1 day')

AND s.state NOT IN ('unknown', 'unavailable', '')

AND CAST(s.state AS FLOAT) BETWEEN 0 AND 15000

) sub

WHERE interval_wh IS NOT NULL

GROUP BY hour, minute

),

twodaysago_integrated AS (

SELECT

hour || ':' || minute as time_slot,

SUM(interval_wh) / 1000.0 as kwh

FROM (

SELECT

strftime('%H', last_updated_ts, 'unixepoch', 'localtime') as hour,

CASE

WHEN CAST(strftime('%M', last_updated_ts, 'unixepoch', 'localtime') AS INTEGER) < 30

THEN '00' ELSE '30'

END as minute,

((CAST(state AS FLOAT) + LAG(CAST(state AS FLOAT)) OVER (ORDER BY last_updated_ts)) / 2.0) *

((last_updated_ts - LAG(last_updated_ts) OVER (ORDER BY last_updated_ts)) / 3600.0) as interval_wh

FROM states s

INNER JOIN states_meta sm ON s.metadata_id = sm.metadata_id

WHERE sm.entity_id = 'sensor.growatt_invpowertolocalload'

AND DATE(last_updated_ts, 'unixepoch', 'localtime') = DATE('now', 'localtime', '-2 days')

AND s.state NOT IN ('unknown', 'unavailable', '')

AND CAST(s.state AS FLOAT) BETWEEN 0 AND 15000

) sub

WHERE interval_wh IS NOT NULL

GROUP BY hour, minute

),

blended AS (

SELECT

dw.dow, dw.time_slot,

CASE

WHEN yp.kwh IS NOT NULL AND tp.kwh IS NOT NULL THEN

ROUND(dw.dow_avg_kwh * 0.65 + yp.kwh * 0.25 + tp.kwh * 0.10, 4)

WHEN yp.kwh IS NOT NULL THEN

ROUND(dw.dow_avg_kwh * 0.75 + yp.kwh * 0.25, 4)

WHEN tp.kwh IS NOT NULL THEN

ROUND(dw.dow_avg_kwh * 0.90 + tp.kwh * 0.10, 4)

ELSE dw.dow_avg_kwh

END as avg_kwh_per_slot

FROM dow_weighted dw

LEFT JOIN yesterday_integrated yp ON dw.time_slot = yp.time_slot

LEFT JOIN twodaysago_integrated tp ON dw.time_slot = tp.time_slot

)

SELECT

'{' ||

GROUP_CONCAT(

'"' || dow || '_' || time_slot || '":' || ROUND(avg_kwh_per_slot, 4),

','

) ||

'}' as result

FROM blended

ORDER BY dow, time_slot;

column: "result"